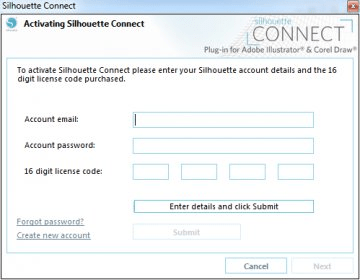

This topic provides an introduction to k-means clustering and an example that uses the Statistics and Machine Learning Toolbox™ function kmeans to find the best clustering solution for a data set.

K-means clustering is a partitioning method. The function kmeans partitions data into k mutually exclusive clusters and returns the index of the cluster to which it assigns each observation. kmeans treats each observation in your data as an object. The function finds a partition in which objects within each cluster are as close to each other as possible, and as far from objects in other clusters as possible. You can choose a distance metric to use with kmeans. Like many clustering methods, k-means clustering requires you to specify the number of clusters k before clustering.

Unlike hierarchical clustering, k-means clustering operates on actual observations rather than the dissimilarity between every pair of observations. Also, k-means clustering creates a single level of clusters, rather than a multilevel hierarchy of clusters. For these reasons, k-means clustering is often more suitable than hierarchical clustering for large amounts of data.

Each cluster in a k-means partition consists of member objects and a centroid (or center). In each cluster, kmeans minimizes the sum of the distances between the centroid and all member objects of the cluster. kmeans computes cluster centroids differently for the different distance metrics.

You can control the details of the minimization using name-value pair arguments available to kmeans; for example, you can specify the initial values of the cluster centroids and the maximum number of iterations for the algorithm. By default, kmeans uses the k-means++ algorithm to initialize cluster centroids, and the squared Euclidean distance metric to determine distances.

When performing k-means clustering, follow the best practices illustrated by the example results below.

In the example, the silhouette plot for the five-cluster solution indicates that five is probably not the right number of clusters, because two clusters contain points with mostly low silhouette values, and the fifth cluster contains a few points with negative values. Also, the average silhouette value for the five clusters is lower than the value for the four clusters. Without knowing how many clusters are in the data, it is a good idea to experiment with a range of values for k, the number of clusters.

Note that the sum of distances decreases as the number of clusters increases. For example, the sum of distances decreases from 2459.98 to 1771.1 to 1647.26 as the number of clusters increases from 3 to 4 to 5. Therefore, the sum of distances is not useful for determining the optimal number of clusters.

By default, kmeans begins the clustering process using a randomly selected set of initial centroid locations.
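To make the mechanics concrete, here is a minimal Python sketch of the idea described above. It is not the implementation behind the toolbox function kmeans. It assumes squared Euclidean distance and plain random selection of data points as starting centroids (not k-means++), and it repeats the run a few times to keep the solution with the lowest total sum of distances. The names `kmeans_once`, `kmeans_best_of`, and `replicates`, along with the toy data, are hypothetical and chosen only for illustration.

```python
import random

def sq_dist(p, q):
    """Squared Euclidean distance between two points given as tuples."""
    return sum((a - b) ** 2 for a, b in zip(p, q))

def mean_point(points):
    """Coordinate-wise mean of a non-empty list of points."""
    n = len(points)
    return tuple(sum(coords) / n for coords in zip(*points))

def kmeans_once(points, k, rng, max_iter=100):
    """One run of Lloyd's algorithm, started from k randomly chosen data points."""
    centroids = rng.sample(points, k)
    labels = None
    for _ in range(max_iter):
        # Assignment step: each point goes to its nearest centroid.
        new_labels = [min(range(k), key=lambda j: sq_dist(p, centroids[j]))
                      for p in points]
        if new_labels == labels:
            break  # assignments stopped changing
        labels = new_labels
        # Update step: move each centroid to the mean of its members.
        for j in range(k):
            members = [p for p, lab in zip(points, labels) if lab == j]
            if members:  # keep the old centroid if a cluster is empty
                centroids[j] = mean_point(members)
    # Total sum of squared point-to-centroid distances for this run.
    total = sum(sq_dist(p, centroids[lab]) for p, lab in zip(points, labels))
    return labels, centroids, total

def kmeans_best_of(points, k, replicates=5, seed=0):
    """Repeat kmeans_once and keep the run with the lowest total distance."""
    rng = random.Random(seed)
    runs = [kmeans_once(points, k, rng) for _ in range(replicates)]
    return min(runs, key=lambda run: run[2])

# Made-up toy data: three square blobs of 20 points each (an assumption,
# not the data set used in the original example).
data_rng = random.Random(1)
blob_centers = [(0.0, 0.0), (10.0, 0.0), (0.0, 10.0)]
points = [(cx + data_rng.uniform(-1, 1), cy + data_rng.uniform(-1, 1))
          for cx, cy in blob_centers for _ in range(20)]

for k in (1, 2, 3, 4):
    labels, centroids, total = kmeans_best_of(points, k)
    print(k, round(total, 2))
```

Because each run starts from different random centroids, individual runs can settle in different local minima, which is why the sketch keeps the best of several. The printed totals also tend to shrink as k grows, so they cannot by themselves indicate the right number of clusters.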
